A database backup and recovery plan is creating backup copies of data. When error (internal exception within the DBMS, Application (or transaction) failures, damage to disk storage devices, file system failures, tape degradation or damage, and deleted data files) occur that damages the integrity of the database, a backup copy of the data can be used as the basis to recover or restore the database. When problems impact the database, the DBA can use the copy backups and the database log to recover the database. Whatever the cause of the problem, the DBA must be able to recover data quickly so that the business can continue to operate. In this article, I will teach how simply we can backup and restore any particular database (PostgreSQL, MongoDB, MySQL)

PostgreSQL database backup and restore

pg_dump is a utility for backing up a PostgreSQL database. pg_dump can be used to backup an database or any specific table, then pg_restore can be used select which parts of the database are to be restored.

Scenario: I have a postgres database instance running at server 192.168.1.23 with one of the database named as “my_dbname”.

We need to store postgres server password in PGPASSWORD variable of the console

PGPASSWORD=my_pass (In Linux)

set PGPASSWORD=my_pass (In Windows)

pg_dump -h 192.168.1.23 -U my_username -Fc my_dbname > pg_my_dbname_backup

Make sure that the postgres binary pg_dump is in your environment variable. If not, you need to change your current directory to the postgres binary folder.

-Fc option denotes that we are using custom dump format. We can use following format according to the need

- -Fp for plain format

- -Fd for directory format

- -Ft for tar format

Also we can use -j <no_of_jobs> for parallel jobs executing which could be useful if dumping requires use executing time.

Instead of output redirection ” > pg_my_dbname_backup” we can specify dumped filename using -f option as

-f pg_my_dbname_backup

pg_dump also allows dumping of specific table using -t option as

-t my_tablename

For full options or full documentation on using pg_dump I recommend to go through official postgres pg_dump documentation page.

If you want to create a routine backup script this wiki has some tips on it which provides a demo script for windows user as:

@echo off

for /f “tokens=1-4 delims=/ ” %%i in (“%date%”) do (

set dow=%%i

set month=%%j

set day=%%k

set year=%%l

)

set datestr=%month%_%day%_%year%

echo datestr is %datestr%

set BACKUP_FILE=<NameOfTheFile>_%datestr%.backup

echo backup file name is %BACKUP_FILE%

SET PGPASSWORD=<PassWord>

echo on

pg_dump -h <HostName> -p 5432 -U <UserName> -F c -b -v -f %BACKUP_FILE% <DATABASENAME>

Let’s say we have some error or problem in our database “my_dbname” and we need to restore it back from our backup file.

First we need to delete the existing erroneous database and create a fresh empty database of same name.

dropdb -h 192.168.1.23 -U my_username my_dbname

createdb -h 192.168.1.23 -U my_username my_dbname

Now we got a fresh empty “my_dbname” database where we can restore our backup into it

pg_restore -h 192.168.1.23 -U my_username -d my_dbname pg_my_dbname_backup

we need to make sure that “pg_my_dbname_backup” is in current directory or could be replace with the path of the database file.

pg_restore can be used with option -C which could allow creation of the database also before restoring. i.e we can use option -C which would eliminate the use of createdb command before pg_restore

MongoDB database backup and restore

mongodump is a utility for creating a binary export of the contents of a mongo database. mongodump can export data from either mongod or mongos instances. mongodump captures the documents in the database in its backup data

I can create a backup directory called “mongodb_backup” using mongodump utility as

mongodump –host 192.168.1.23:27017 –out mongodb_backup

We can add

–db database_name to backup only database_name database

–collection collection_name to backup only collection_name collection

–username my_username –password my_password if need authentication for instance connection

–excludeCollection Collection_name to exclude any specific collection to be dumped

–archive my_mongo_dump.archive for dump in archive format

–gzip to dump in gzip format rather than directory

More on mongodump can be found in official documentation

Same way we can restore of dumped (backed up) database into running mongo instance using mongorestore as

mongorestore mongodb_backup

mongorestore can be used with same options as provided above for mongodump

More on mongorestore can be found in official documentation

MySQL database backup and restore

MySQL too provides a tool mysqldump to dump/backup any database

mysqldump -h 192.168.1.23 -u my_username -p my_password my_dbname > mysql_backup.sql

If we need a gzip format we can pipe it to our gzip command before output redirection as

mysqldump -h 192.168.1.23 -u my_username -p my_password my_dbname | gzip -9 > mysql_backup.sql.gzip

For restoring mysql database we need to make sure that restoring database exist and is empty.

mysql -u my_username -p my_password my_dbname < mysql_backup.sql

mysqlimport can also be used to import dumped mysql file as

mysqlimport -u my_username -p my_password my_dbname mysql_backup.sql

If we have a gzip format of dumped file we need to extract it before restore as

gunzip < mysql_backup.sql.gzip | mysql -u my_username -p my_password my_dbname

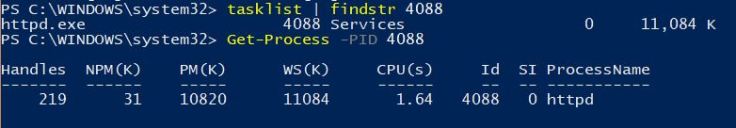

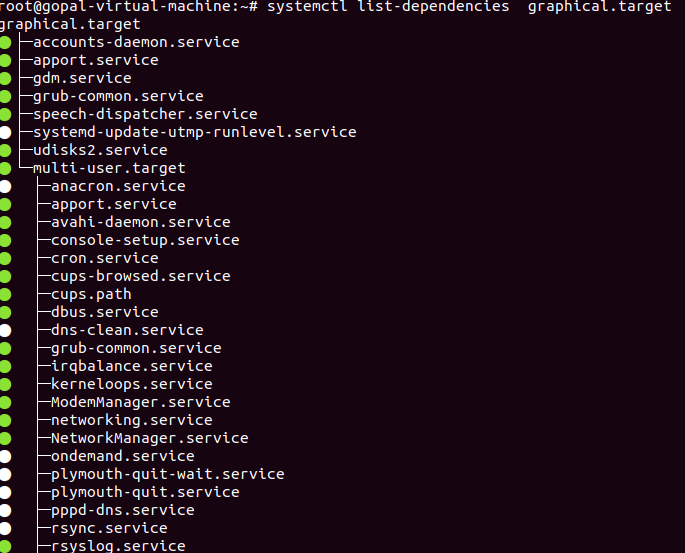

systemctl start/stop httpd.service

systemctl enable/disable httpd.service

systemctl mask/unmak httpd.service #Mask service wont be started unless unmasked

systemctl is-active/is-enabled/is-failed httpd.service

systemctl reload-or-restart sshd.service

systemctl status httpd.service #Service status, CGroup hierarchy and initial few logs related to service

systemctl poweroff/reboot/halt/rescue

systemctl start/stop httpd.service

systemctl enable/disable httpd.service

systemctl mask/unmak httpd.service #Mask service wont be started unless unmasked

systemctl is-active/is-enabled/is-failed httpd.service

systemctl reload-or-restart sshd.service

systemctl status httpd.service #Service status, CGroup hierarchy and initial few logs related to service

systemctl poweroff/reboot/halt/rescue

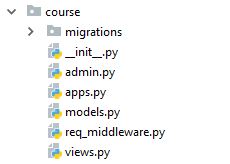

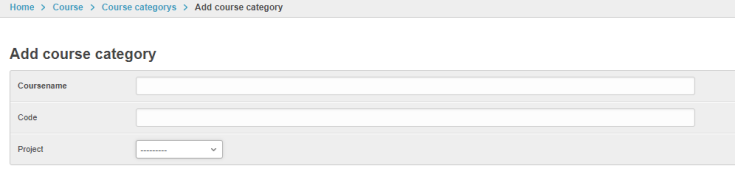

I can now successfully setup admin interface for managing my mysql database.

I can now successfully setup admin interface for managing my mysql database.